Abstract

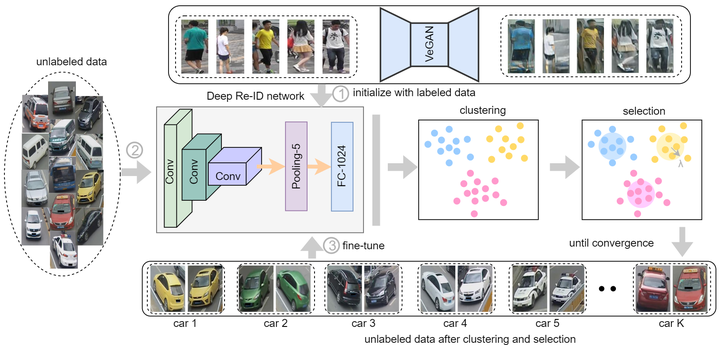

Recent literature on the re-identification (Re-ID) task has reported the superiority of deeply learned representations. In this paper, we study a novel transfer learning problem, termed Distant Domain Transfer Learning (DDTL), for the Re-ID task. Unlike existing transfer learning problems, which assume a close relation between the source domain and the target domain, in the DDTL problem the target domain can be totally different from the source domain. For example, the source task classifies pedestrian images, whereas the target task distinguishes vehicle images. Our goal is to perform an unseen and unrelated task starting from a model previously trained on a labeled dataset, without using any samples from intermediate domains. In particular, we consider the more practical setting of learning deep features without labels, and propose a Deep Unsupervised Progressive Learning (DUPL) method to transfer pretrained deep representations to unseen domains. Specifically, our method performs clustering and fine-tuning of the CNN to improve the performance of the original model, which was trained on the unrelated labeled dataset. Empirical studies on a distant domain adaptation task (pedestrian -> vehicle) demonstrate the effectiveness of the proposed method, with an improvement in mAP of up to 15% over “non-transfer” methods.
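The clustering and fine-tuning cycle summarized above can be illustrated with a minimal sketch. It is not the authors' implementation. It assumes k-means as the clustering step, a distance-to-centroid threshold for choosing reliable pseudo-labeled samples, and a threshold that is relaxed from round to round; the abstract specifies none of these. The names `kmeans`, `select_reliable`, `progressive_rounds`, and `fine_tune` are hypothetical, and `fine_tune` stands in for CNN fine-tuning followed by feature re-extraction.

```python
import math
import random


def dist(a, b):
    """Euclidean distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def assign(features, centroids):
    """Index of the nearest centroid for every feature vector."""
    return [min(range(len(centroids)), key=lambda c: dist(f, centroids[c]))
            for f in features]


def kmeans(features, k, iters=20, seed=0):
    """Plain k-means (an assumed choice of clustering algorithm)."""
    rng = random.Random(seed)
    centroids = [list(f) for f in rng.sample(features, k)]
    for _ in range(iters):
        labels = assign(features, centroids)
        for c in range(k):
            members = [f for f, l in zip(features, labels) if l == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return centroids, assign(features, centroids)


def select_reliable(features, centroids, labels, threshold):
    """Indices of samples lying within `threshold` of their assigned centroid."""
    return [i for i, (f, l) in enumerate(zip(features, labels))
            if dist(f, centroids[l]) <= threshold]


def progressive_rounds(features, k, thresholds, fine_tune):
    """One clustering + selection + fine-tuning round per threshold.

    `fine_tune(features, pseudo_labeled)` is a hypothetical callback that
    would update the CNN on the selected samples and return new features.
    Returns the number of samples selected in each round.
    """
    selected_counts = []
    for threshold in thresholds:
        centroids, labels = kmeans(features, k)
        keep = select_reliable(features, centroids, labels, threshold)
        pseudo_labeled = [(i, labels[i]) for i in keep]
        features = fine_tune(features, pseudo_labeled)
        selected_counts.append(len(keep))
    return selected_counts


# Toy 2-D "features" in two groups; the identity callback leaves them unchanged.
toy_features = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.2], [1.0, 1.0],
                [5.0, 5.0], [5.1, 5.0], [5.0, 5.2], [4.0, 4.0]]
history = progressive_rounds(toy_features, k=2,
                             thresholds=[0.2, 1.0, 100.0],
                             fine_tune=lambda feats, pseudo: feats)
```

In this toy run the features never change, so relaxing the threshold can only admit more samples in each round. In a real pipeline the callback would return features re-extracted from the updated network, and the clusters would shift between rounds.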